BARDI: An Open-Source Python NLP Text Preprocessing Pipeline

Open-source ML preprocessing framework ensuring reproducible pipelines across research, production, and federated cancer institutions

Project Background

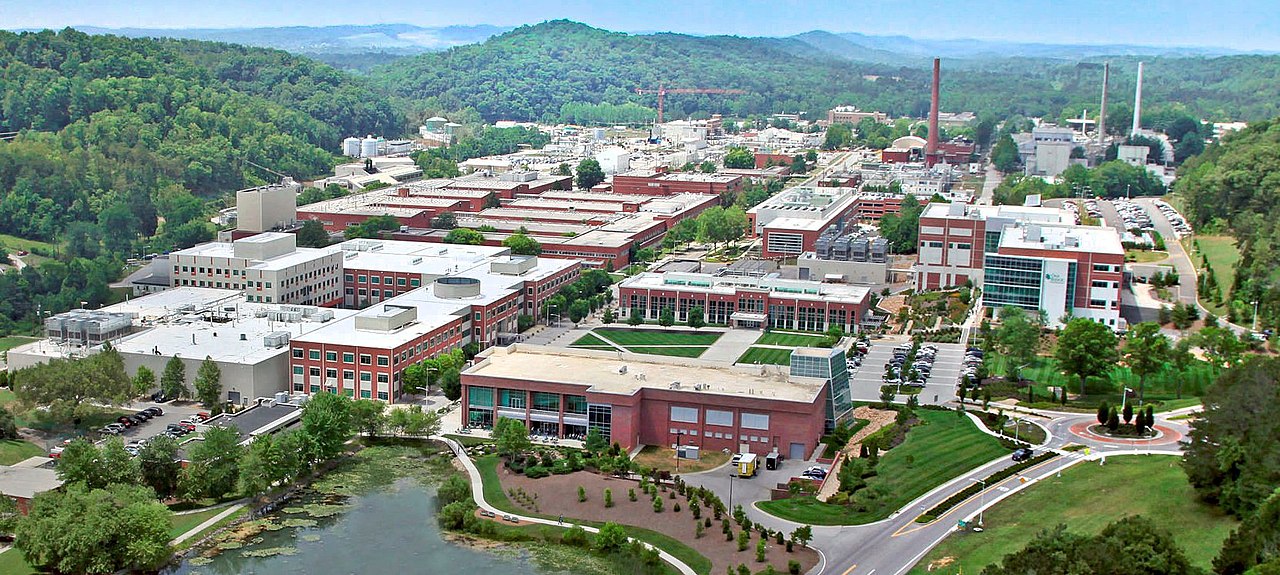

MOSSAIC (Modeling Outcomes Using Surveillance Data and Scalable Artificial Intelligence for Cancer) was a collaboration between the Department of Energy and the National Cancer Institute to enable near real-time cancer surveillance at population scale. The project addressed a critical bottleneck in public health: cancer registries participating in NCI's SEER (Surveillance, Epidemiology, and End Results) program collect population-level data but rely on manual extraction from clinical documents, creating multi-month delays in disease reporting, labor-intensive chart review, and inconsistent data extraction across state registries.

MOSSAIC applied natural language processing and deep learning to automate information extraction from unstructured clinical text such as pathology reports, radiology notes, and treatment summaries. The project developed AI models and open-source software to extract structured, standardized cancer data at the speed and scale needed for near real-time surveillance across 11 SEER cancer registries spanning Louisiana, Kentucky, California, Hawaii, and seven other states, each managing its own patient population and clinical data systems.

BARDI (Batch-processing Abstraction for Raw Data Integration) emerged from immediate reproducibility and deployment challenges within the ORNL research team. Data scientists were preprocessing clinical text inconsistently, making it impossible to compare model results or reproduce findings. More critically, models trained in research notebooks couldn't be deployed to production because preprocessing logic was scattered across different implementations. BARDI solved this by providing declarative pipeline configurations that ensured researchers used identical text cleaning and normalization steps, then allowed those exact pipelines to be packaged into the production API deployed at IMS (Information Management Services), the central repository processing data from state registries. This eliminated the research-to-production gap and established a foundation for sharing preprocessing configurations with individual registries, enabling federated model training while respecting data sovereignty requirements.

BARDI's Purpose

Built on Apache Arrow's columnar memory model and Polars for multithreaded computation, BARDI provided modular NLP-specific components including text normalizers with configurable rules, pre-tokenizers, embedding generators, vocabulary encoders, and label processors. The framework emphasized reproducibility through configuration capture and metadata tracking, explicitly defining every parameter, regex pattern, and transformation so that pipelines could be version-controlled and shared across researchers and institutions. This design enabled ORNL researchers to eliminate preprocessing drift, then package research models for production at IMS without re-implementing any preprocessing logic. We open-sourced the framework and shared it with SEER cancer registries and other cancer research institutions, allowing them to process their local data using the same preprocessing methods without rebuilding months of data engineering infrastructure.

My Role

I led the design and architecture of BARDI, bringing a focus on efficient data processing and clean software engineering to the MOSSAIC project. Working closely with machine learning engineer Patrycja Krawczuk, I gained a deep understanding of the ML workflow and NLP requirements that informed the framework's design. I selected Apache Arrow and Polars for their performance characteristics in large-scale text processing, then co-developed the framework with Patrycja to ensure it met both engineering and research needs.

Technical Implementation

BARDI's architecture centered on a Pipeline class that orchestrated sequences of Step objects, each representing a discrete preprocessing operation. Steps were composable and type-safe, validating input/output schemas at pipeline construction time to catch incompatibilities before execution. The framework used Apache Arrow's columnar format as the canonical in-memory representation, enabling zero-copy data sharing between steps and efficient columnar operations through Polars' lazy evaluation engine.
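
To make the idea of construction-time schema validation concrete, here is a minimal standard-library sketch. It is not BARDI's code: `Step`, `SketchPipeline`, and the `requires`/`produces` fields are hypothetical names chosen for illustration, and rows are plain dictionaries rather than Arrow tables.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One preprocessing operation with declared input and output columns."""
    name: str
    requires: dict   # column name -> type the step reads
    produces: dict   # column name -> type the step writes
    fn: Callable[[dict], dict]


class SketchPipeline:
    """Hypothetical stand-in for a pipeline; not BARDI's Pipeline class."""

    def __init__(self, schema: dict):
        self.schema = dict(schema)   # schema as it will look after the steps added so far
        self.steps = []

    def add_step(self, step: Step) -> None:
        # Check the step against the projected schema before any data is touched.
        for column, expected in step.requires.items():
            actual = self.schema.get(column)
            if actual is not expected:
                raise TypeError(
                    f"{step.name} needs {column!r} as {expected.__name__}, "
                    f"but the pipeline provides {actual}"
                )
        self.schema.update(step.produces)
        self.steps.append(step)

    def run(self, rows):
        for step in self.steps:
            rows = [step.fn(dict(row)) for row in rows]
        return rows


lowercase = Step("lowercase", {"text": str}, {"text": str},
                 lambda r: {**r, "text": r["text"].lower()})
tokenize = Step("tokenize", {"text": str}, {"text": list},
                lambda r: {**r, "text": r["text"].split()})

pipeline = SketchPipeline(schema={"text": str})
pipeline.add_step(lowercase)
pipeline.add_step(tokenize)
result = pipeline.run([{"text": "Hello World"}])

# A string-only step added after tokenization is rejected before anything runs.
try:
    pipeline.add_step(lowercase)
    rejected = False
except TypeError:
    rejected = True
```

The point of the design is that the schema is projected forward as steps are added, so an incompatible ordering fails when the pipeline is assembled rather than partway through a long batch job.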

The data ingestion layer provided unified connectors across multiple formats through a Dataset abstraction. Users could load data via from_file() for Parquet and Arrow files, from_json() for JSON documents, from_pandas() and from_polars() for in-memory dataframes, and from_duckdb() for SQL queries against the project's DuckDB-based data lakehouse. This allowed researchers to work with their existing data storage patterns without reformatting. Output serialization supported the same formats through corresponding write_file() and conversion methods, with DataWriteConfig objects controlling compression, partitioning, and schema enforcement.
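
The sketch below illustrates the general shape of such an abstraction: several constructors that all converge on one column-oriented representation. It uses only the standard library, and `ColumnarDataset`, `from_records`, and `from_json_lines` are hypothetical names for this illustration, not BARDI's connectors.

```python
import json


class ColumnarDataset:
    """Hypothetical wrapper: every connector ends in the same column-oriented form."""

    def __init__(self, columns: dict):
        lengths = {len(values) for values in columns.values()}
        if len(lengths) > 1:
            raise ValueError("all columns must have the same length")
        self.columns = columns

    @classmethod
    def from_records(cls, records: list) -> "ColumnarDataset":
        # Pivot row-oriented dicts into one list per column; missing keys become None.
        names = []
        for record in records:
            for key in record:
                if key not in names:
                    names.append(key)
        return cls({name: [rec.get(name) for rec in records] for name in names})

    @classmethod
    def from_json_lines(cls, text: str) -> "ColumnarDataset":
        # One JSON object per non-empty line.
        records = [json.loads(line) for line in text.splitlines() if line.strip()]
        return cls.from_records(records)

    def to_records(self) -> list:
        names = list(self.columns)
        return [dict(zip(names, row)) for row in zip(*self.columns.values())]


a = ColumnarDataset.from_records([
    {"patient_id": 1, "text": "note one"},
    {"patient_id": 2, "text": "note two"},
])
b = ColumnarDataset.from_json_lines(
    '{"patient_id": 1, "text": "note one"}\n'
    '{"patient_id": 2, "text": "note two"}\n'
)
```

Because both constructors produce the same internal columns, downstream steps never need to know where the data came from.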

The NLP preprocessing components formed the core step library. The Normalizer step applied configurable text cleaning operations including case normalization, whitespace standardization, and regex-based pattern removal. We built domain-specific regex libraries for pathology reports and clinical notes, packaged as reusable RegexSet objects that could be version-controlled and shared. The PreTokenizer handled splitting text into tokens using configurable delimiters and Unicode normalization rules, preparing text for downstream tokenization. The EmbeddingGenerator integrated with sentence transformer models to produce dense vector representations, caching embeddings to disk to avoid recomputation. The VocabEncoder and TokenizerEncoder steps trained vocabulary mappings and tokenizer models on the corpus, then applied those learned transformations consistently to new data. The LabelProcessor handled multi-label classification targets, converting string labels to integer encodings with configurable mapping strategies.

Each step exposed its configuration as a serializable dictionary, capturing hyperparameters, file paths to trained artifacts (vocabularies, tokenizer models), and preprocessing decisions (which regex patterns to apply, normalization rules). Pipeline execution generated a metadata manifest containing the full configuration tree, input/output schemas, execution timestamps, and data lineage. This manifest could be committed to version control alongside model training code, providing the audit trail needed for reproducible research and regulatory documentation. When deploying to production, we serialized the entire pipeline configuration to JSON, which the production API loaded to reconstruct identical preprocessing logic without code dependencies.
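
As a rough illustration of what such a manifest might contain, the following sketch assembles one from a list of step configurations. The field names (`steps`, `config_sha256`, `executed_at`) and the fingerprinting scheme are assumptions made for this example, not BARDI's actual manifest format.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_manifest(step_configs: list, input_schema: dict, output_schema: dict) -> dict:
    """Assemble a manifest; the field names here are illustrative assumptions."""
    # Canonical JSON (sorted keys, fixed separators) so equal configs hash equally.
    canonical = json.dumps(step_configs, sort_keys=True, separators=(",", ":"))
    return {
        "steps": step_configs,
        "input_schema": input_schema,
        "output_schema": output_schema,
        "config_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "executed_at": datetime.now(timezone.utc).isoformat(),
    }


steps = [
    {"step": "normalizer", "fields": ["text"], "lowercase": True},
    {"step": "pre_tokenizer", "fields": ["text"], "split_pattern": " "},
]
manifest = build_manifest(steps, {"text": "string"}, {"text": "list[string]"})

# Key order does not change the fingerprint; a changed parameter does.
reordered = [{"lowercase": True, "fields": ["text"], "step": "normalizer"}, steps[1]]
changed = [{**steps[0], "lowercase": False}, steps[1]]
same = build_manifest(reordered, {}, {})["config_sha256"] == manifest["config_sha256"]
different = build_manifest(changed, {}, {})["config_sha256"] != manifest["config_sha256"]
```

A fingerprint of this kind gives two sites a quick way to confirm they ran the same preprocessing configuration without comparing the full configuration by hand.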

Usage

Basic Pipeline Setup

BARDI pipelines are built by composing modular preprocessing steps. Here's a complete example preprocessing clinical text for NLP model training:

    import pandas as pd

    from bardi import nlp_engineering
    from bardi.pipeline import Pipeline
    from bardi.data.data_handlers import from_pandas
    from bardi.nlp_engineering.regex_library.pathology_report import PathologyReportRegexSet

    # Load your data
    df = pd.DataFrame([
        {
            "patient_id": 1,
            "text": "The patient presented with notable changes in behavior...",
            "label": "positive"
        },
        # ... more records
    ])

    # Convert to BARDI Dataset
    dataset = from_pandas(df)

    # Initialize pipeline
    pipeline = Pipeline(dataset=dataset, write_outputs=False)

Adding Preprocessing Steps

Text Normalization

Apply regex-based cleaning and standardization to clinical text:

    # Use pre-built pathology report regex patterns
    regex_set = PathologyReportRegexSet().get_regex_set()

    # Add normalizer to pipeline
    pipeline.add_step(
        nlp_engineering.CPUNormalizer(
            fields=['text'],
            regex_set=regex_set,
            lowercase=True
        )
    )

Alternatively, create your own reproducible domain-specific text cleaning rules:

    from bardi.nlp_engineering.regex_library.regex_set import RegexSet

    custom_regex = RegexSet(
        name="medical_text_cleaning",
        patterns=[
            (r'\bpatient\b', 'pt'),
            (r'\d{3}-\d{2}-\d{4}', '[SSN]'),  # Redact SSNs
            (r'Dr\.\s+\w+', '[PHYSICIAN]')    # Anonymize names
        ]
    )
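
To see what these three patterns do to a sentence, the sketch below applies the same (pattern, replacement) pairs in order with Python's `re` module. It stands in for the normalizer only conceptually; the `apply_patterns` helper is hypothetical, and how BARDI orders lowercasing relative to regex substitution is not stated here, so treat the second result as a caution to verify rather than a description of the library's behavior.

```python
import re

# Same (pattern, replacement) pairs as the custom patterns above, as plain tuples.
patterns = [
    (r'\bpatient\b', 'pt'),
    (r'\d{3}-\d{2}-\d{4}', '[SSN]'),
    (r'Dr\.\s+\w+', '[PHYSICIAN]'),
]


def apply_patterns(text: str) -> str:
    # Apply each substitution in list order.
    for pattern, replacement in patterns:
        text = re.sub(pattern, replacement, text)
    return text


raw = "Dr. Smith saw the patient, SSN 123-45-6789."
cleaned = apply_patterns(raw)
# → "[PHYSICIAN] saw the pt, SSN [SSN]."

# If the text is lowercased first, the case-sensitive physician pattern no longer matches.
cleaned_after_lowercasing = apply_patterns(raw.lower())
# → "dr. smith saw the pt, ssn [SSN]."
```

Patterns that depend on capitalization should therefore either run before any lowercasing or be written case-insensitively.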

Pre-Tokenization

Split text into tokens for downstream processing:

    pipeline.add_step(
        nlp_engineering.CPUPreTokenizer(
            fields=['text'],
            split_pattern=' '
        )
    )
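
Conceptually, pre-tokenization with a split pattern is a regex split. The sketch below uses `re.split` to show why whitespace standardization matters beforehand; `pre_tokenize` is a hypothetical helper, and dropping empty tokens is a choice made for this example rather than documented BARDI behavior.

```python
import re


def pre_tokenize(text: str, split_pattern: str = " ") -> list:
    # Split on the pattern and drop empty strings left by repeated delimiters.
    return [token for token in re.split(split_pattern, text) if token]


# A double space yields an empty token when splitting naively.
naive = re.split(" ", "invasive  ductal carcinoma")
# → ['invasive', '', 'ductal', 'carcinoma']

tokens = pre_tokenize("invasive  ductal carcinoma")
# → ['invasive', 'ductal', 'carcinoma']
```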

Embedding Generation

Generate dense vector representations using transformer models:

    pipeline.add_step(
        nlp_engineering.EmbeddingGenerator(
            fields=['text'],
            model_name='sentence-transformers/all-MiniLM-L6-v2',
            batch_size=32
        )
    )
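
Caching embeddings on disk can be sketched as a content-addressed lookup keyed on the model name and the input text. Everything below is illustrative: `toy_embed` is a placeholder for a real model call, and the JSON-file-per-text layout is an assumption, not BARDI's cache format.

```python
import hashlib
import json
import tempfile
from pathlib import Path

stats = {"model_calls": 0}


def toy_embed(text: str) -> list:
    """Placeholder for a real model call; returns a tiny deterministic vector."""
    stats["model_calls"] += 1
    return [float(len(text)), float(text.count(" ") + 1)]


def cached_embed(text: str, model_name: str, cache_dir: Path) -> list:
    # Key on model name plus text so switching models never reuses stale vectors.
    key = hashlib.sha256(f"{model_name}\n{text}".encode("utf-8")).hexdigest()
    path = cache_dir / f"{key}.json"
    if path.exists():
        return json.loads(path.read_text())
    vector = toy_embed(text)
    path.write_text(json.dumps(vector))
    return vector


with tempfile.TemporaryDirectory() as tmp:
    cache = Path(tmp)
    first = cached_embed("ductal carcinoma", "toy-model", cache)
    second = cached_embed("ductal carcinoma", "toy-model", cache)   # served from disk
    third = cached_embed("ductal carcinoma", "other-model", cache)  # new key, recomputed
```

Including the model name in the key is the important detail: without it, changing models would silently return vectors computed by the previous one.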

Vocabulary Encoding

Build and apply vocabulary mappings:

    pipeline.add_step(
        nlp_engineering.VocabEncoder(
            fields=['text'],
            unknown_token='<UNK>'
        )
    )
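
The idea behind vocabulary encoding is to fit a token-to-id mapping once and then reuse it unchanged on new data. A minimal sketch follows, assuming the unknown token takes id 0 and the remaining tokens are sorted for stable ids; both are choices made for this illustration, and `build_vocab` and `encode` are hypothetical helpers rather than BARDI functions.

```python
def build_vocab(token_lists, unknown_token="<UNK>"):
    # Reserve id 0 for unknowns; sort the rest so ids are stable across runs.
    tokens = sorted({token for tokens in token_lists for token in tokens})
    vocab = {unknown_token: 0}
    for token in tokens:
        vocab.setdefault(token, len(vocab))
    return vocab


def encode(tokens, vocab, unknown_token="<UNK>"):
    # Tokens never seen during fitting fall back to the unknown id.
    return [vocab.get(token, vocab[unknown_token]) for token in tokens]


train = [["invasive", "ductal", "carcinoma"], ["ductal", "hyperplasia"]]
vocab = build_vocab(train)
# → {'<UNK>': 0, 'carcinoma': 1, 'ductal': 2, 'hyperplasia': 3, 'invasive': 4}

ids = encode(["invasive", "lobular", "carcinoma"], vocab)
# → [4, 0, 1]  ("lobular" was not in the training corpus)
```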

Label Processing

Encode target labels for classification:

    pipeline.add_step(
        nlp_engineering.LabelProcessor(
            fields=['label'],
            multi_label=False
        )
    )
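
For single-label targets, label processing reduces to fitting a string-to-integer mapping and keeping its inverse for decoding predictions. The sketch below assumes ids are assigned in sorted label order, which is one of several possible mapping strategies; `fit_label_map` is a hypothetical helper.

```python
def fit_label_map(labels):
    # Sorted unique labels give the same ids regardless of row order.
    return {label: i for i, label in enumerate(sorted(set(labels)))}


labels = ["positive", "negative", "positive"]
label_to_id = fit_label_map(labels)
# → {'negative': 0, 'positive': 1}

id_to_label = {i: label for label, i in label_to_id.items()}
encoded = [label_to_id[label] for label in labels]
# → [1, 0, 1]
```

Storing `id_to_label` alongside the model is what lets a production system translate integer predictions back into the registry's label vocabulary.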

Running the Pipeline

    # Execute all steps
    processed_dataset = pipeline.run()

    # Access metadata for reproducibility
    metadata = pipeline.get_metadata()

    # Save pipeline configuration
    config = pipeline.to_config()

Saving Configurations

    # Save pipeline config for reproducibility
    import json

    with open('preprocessing_config.json', 'w') as f:
        json.dump(pipeline.to_config(), f, indent=2)

Loading Configurations

    # Recreate pipeline from config
    import json

    with open('preprocessing_config.json', 'r') as f:
        config = json.load(f)

    # new_dataset is a BARDI Dataset built from the new data (e.g. with from_pandas)
    new_pipeline = Pipeline.from_config(config, dataset=new_dataset)
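
One common way to rebuild behavior from configuration alone is a registry that maps step names in the config to implementations. The sketch below shows that pattern with toy string operations; `STEP_REGISTRY` and `pipeline_from_config` are hypothetical and are not how `Pipeline.from_config` is necessarily implemented.

```python
import json

# Registry mapping a step name in the config to the function that implements it.
STEP_REGISTRY = {
    "lowercase": lambda text: text.lower(),
    "replace": lambda text, old, new: text.replace(old, new),
}


def pipeline_from_config(config: dict):
    """Rebuild a callable from config alone; hypothetical, not Pipeline.from_config."""
    # An unknown step name raises KeyError here, before any data is processed.
    steps = [(STEP_REGISTRY[s["step"]], s.get("params", {})) for s in config["steps"]]

    def run(text: str) -> str:
        for fn, params in steps:
            text = fn(text, **params)
        return text

    return run


config = {"steps": [
    {"step": "lowercase"},
    {"step": "replace", "params": {"old": "patient", "new": "pt"}},
]}

# Round-trip through JSON, as a deployment would, then rebuild and compare.
original = pipeline_from_config(config)
restored = pipeline_from_config(json.loads(json.dumps(config)))
sample = "The Patient was seen."
output = restored(sample)
# → "the pt was seen."
```

Because the restored pipeline is built only from the JSON document, matching output on a sample record is a cheap check that research and production apply the same transformations.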